Claude Code ROI Calculator

A Claude Code plugin that reads your session history and produces an exec summary of your net ROI, what's working, and concrete suggestions for getting more out of Claude Code.

Timeline • Role

2025 • Built with Sebastian Tremblay

Problem

Most developers using AI coding tools have no idea whether they're actually saving time. They feel productive in the moment, but can't answer a simple question: is the subscription paying for itself? Without data, the ROI of AI assistance is pure intuition—and intuition is notoriously bad at accounting for the sessions where Claude burned an hour going in circles.

Solution

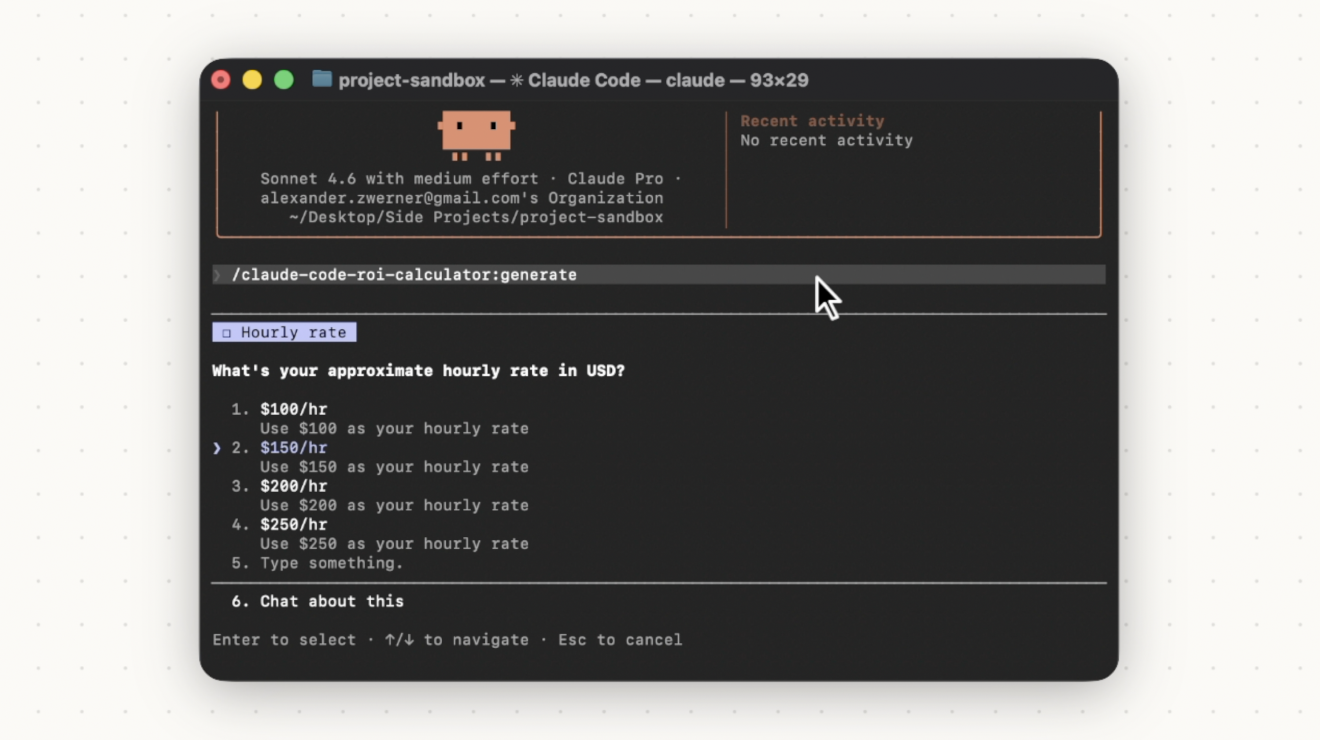

ROI Calculator is a Claude Code plugin that reads your session history for the current project and produces a short exec summary with your net ROI, what's working well, and concrete suggestions for getting more out of Claude Code. It takes one command, and all processing runs locally.

Part 1: Understanding the problem

This started as an experiment with Sebastian Tremblay to see whether Claude Code's session data contained enough signal to estimate your actual return on investment. The .jsonl logs in ~/.claude/projects/ turned out to be a dense, structured record of session activity, task patterns, and time signals. That was enough raw material to build something real.
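
To get a feel for what those logs hold without assuming anything about their schema, a minimal sketch like the following can walk a JSONL file and tally the top-level keys it sees. The function names are hypothetical, and the only assumption is that each line holds one JSON object; the plugin's actual reader may look quite different.

```python
import json
from collections import Counter
from pathlib import Path
from typing import Iterator


def iter_records(path: Path) -> Iterator[dict]:
    """Yield one dict per well-formed JSON object line; skip anything else."""
    with path.open(encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if not line:
                continue
            try:
                record = json.loads(line)
            except json.JSONDecodeError:
                continue  # tolerate truncated or partially written lines
            if isinstance(record, dict):
                yield record


def key_frequencies(path: Path) -> Counter:
    """Count how often each top-level key appears in one session file."""
    counts: Counter = Counter()
    for record in iter_records(path):
        counts.update(record.keys())
    return counts
```

Pointing `key_frequencies` at each file found by `(Path.home() / ".claude" / "projects").rglob("*.jsonl")` gives a quick inventory of which fields are present before any signal extraction is designed around them.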

Part 2: Coming up with ideas and solutions

The pipeline is split into two deliberate stages. A Python CLI reads the session files and extracts usage indicators deterministically, with no LLM involved in this step. Claude then reads the grouped signals, estimates time saved per session, maps it against subscription cost, and writes the ROI summary. Because the extraction is deterministic, the same session history always yields the same signals, and the LLM only ever sees structured input, which makes the output more reliable.
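
The arithmetic behind the final figure is not spelled out here, so the following is only a sketch of one common way to define net ROI: the value of time saved minus the subscription cost, divided by the subscription cost. The `SessionEstimate` type, the hourly rate, and the cost figures are hypothetical placeholders, not the plugin's actual inputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionEstimate:
    """Hypothetical per-session estimate; negative minutes model a session that cost time."""
    session_id: str
    minutes_saved: float


def net_roi(estimates: list[SessionEstimate], hourly_rate: float, subscription_cost: float) -> float:
    """Return (value of time saved - cost) / cost for one billing period."""
    if subscription_cost <= 0:
        raise ValueError("subscription_cost must be positive")
    hours_saved = sum(e.minutes_saved for e in estimates) / 60
    value = hours_saved * hourly_rate
    return (value - subscription_cost) / subscription_cost


# Illustrative numbers only: two productive sessions and one that lost an hour.
estimates = [
    SessionEstimate("a", 90),
    SessionEstimate("b", -60),
    SessionEstimate("c", 150),
]
print(net_roi(estimates, hourly_rate=100.0, subscription_cost=100.0))  # prints 2.0
```

Allowing negative `minutes_saved` matters for an honest total: sessions that went in circles subtract from the sum instead of being silently dropped.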

Part 3: Deciding + prototyping

Privacy was a hard constraint throughout. Everything runs locally. Any scratch files written during processing are deleted after Claude reads them. Nothing is sent anywhere beyond the Anthropic API call that generates the summary—and that call only sees the structured signals, not raw session transcripts.
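
One way to guarantee that kind of cleanup, sketched here under the assumption that the signals are serialized as JSON to a temporary file, is a context manager that deletes the file even if the consumer fails. The `scratch_file` helper and the payload shape are hypothetical illustrations, not the plugin's code.

```python
import json
import os
import tempfile
from contextlib import contextmanager
from pathlib import Path
from typing import Iterator


@contextmanager
def scratch_file(payload: dict) -> Iterator[Path]:
    """Write payload to a temp JSON file and remove it when the block exits."""
    fd, name = tempfile.mkstemp(suffix=".json")
    path = Path(name)
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            json.dump(payload, handle, sort_keys=True)
        yield path
    finally:
        path.unlink(missing_ok=True)  # runs on success and on error


# The file exists only inside the with block.
with scratch_file({"sessions": 3}) as path:
    contents = path.read_text(encoding="utf-8")
```

Putting the deletion in a `finally` clause means an exception while the file is being read still leaves nothing behind on disk.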

Part 4: Round of testing and improvements

The tool works best when you use plan mode or write messages describing intent. Silent sessions don't produce much signal, so the quality of the output is roughly proportional to how explicitly you narrate your work. That's a real limitation, and worth being upfront about: this isn't a replacement for proper time-tracking or engineering metrics. It's a last-mile tool for the gap between 'I think Claude Code is useful' and 'here's the actual number.'

Reflections

The experiment confirmed the hypothesis—session data does contain enough signal to produce a credible ROI estimate. What we didn't expect was how useful the 'what's working' and 'suggestions' sections would be relative to the number itself. The ROI figure answers a yes/no question most developers already suspect the answer to. The patterns and recommendations are where the tool earns its keep.